Google’s Gemini models use a decoder-only transformer architecture optimized for efficiency and multimodal learning. Because Gemini is trained on text, images, audio, video, and code from the start, it can process and reason across these data types natively. The architecture targets scalable, low-latency performance on complex reasoning tasks, combining advanced attention mechanisms, feed-forward networks with GeGLU activations, and support for chain-of-thought reasoning.

Key technical aspects of Gemini’s transformer architecture and multimodal learning include:

-

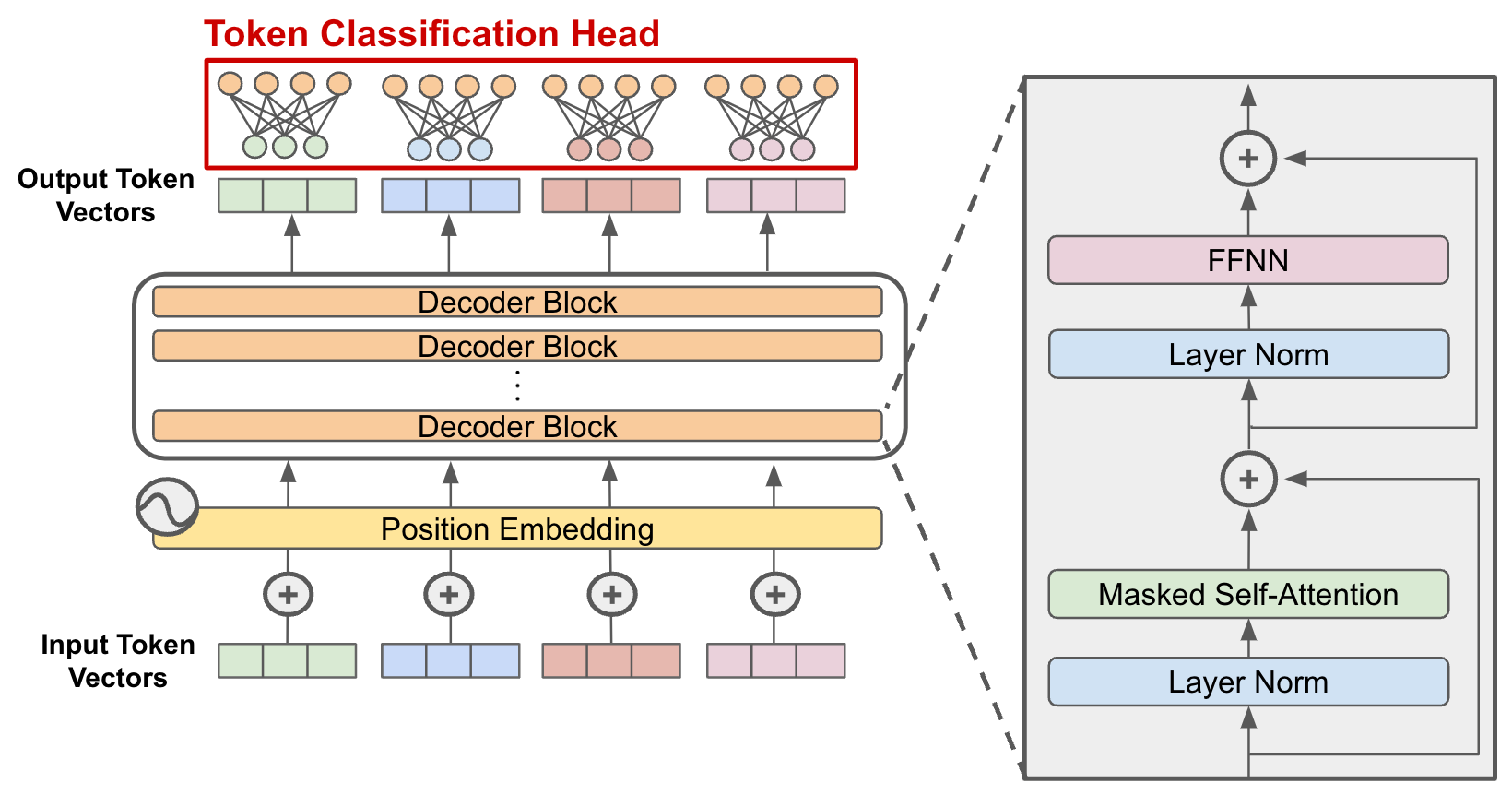

Transformer Decoder Architecture: Gemini uses a stack of transformer decoder blocks, each containing causal self-attention layers and feed-forward neural networks. These blocks process input tokens sequentially, predicting the next token in a sequence. The feed-forward layers apply pointwise transformations with non-linear activations (GeGLU in Gemini) to enhance expressive power.

-

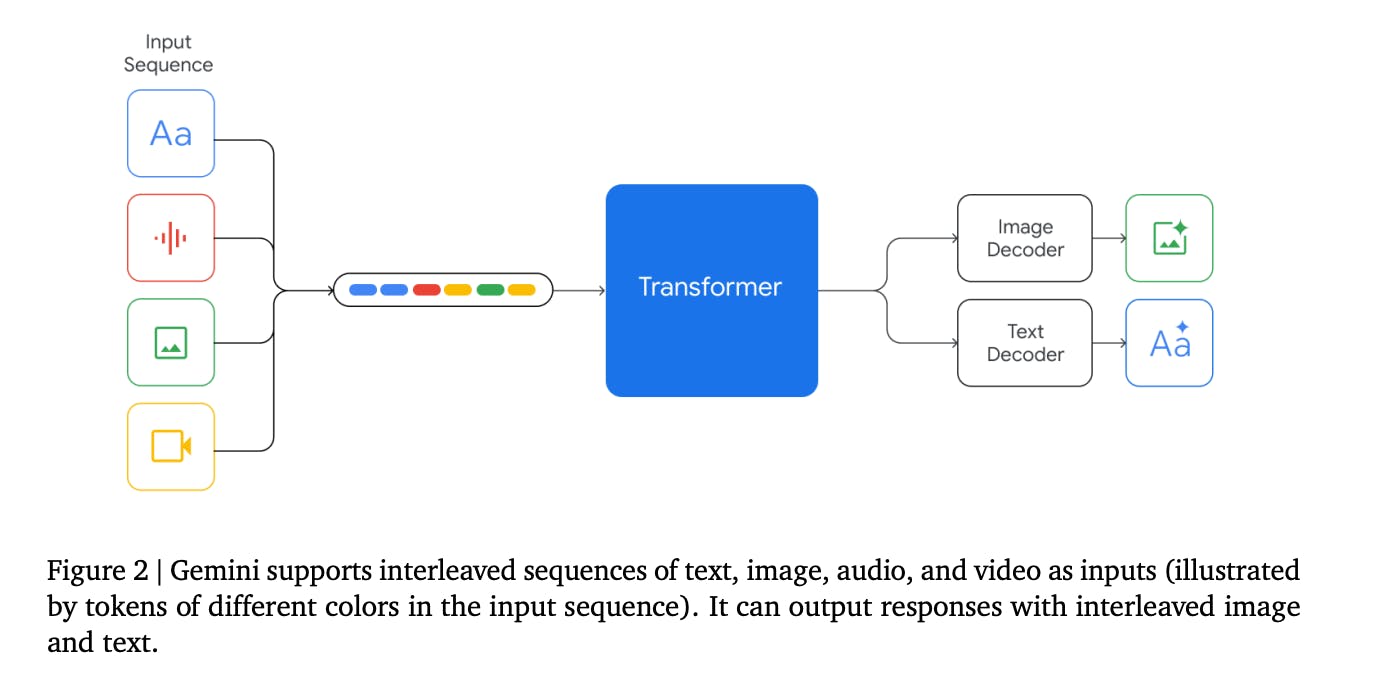

Native Multimodality: Unlike many prior multimodal models that combine separately trained unimodal models, Gemini is trained from scratch on multimodal data encompassing text, images, audio, video, and code. This native multimodal training enables seamless reasoning across modalities and the generation of interleaved outputs (e.g., text and images together).

- Multimodal Input and Output: Gemini can accept and produce interleaved sequences of different data types, such as text, images, and audio, which lets it handle tasks that combine several kinds of input and output in real time.

- Chain-of-Thought Reasoning: Gemini can break a complex problem down into intermediate reasoning steps before giving an answer, which improves performance on tasks that require deep understanding, such as coding, medical text analysis, and customer service applications.

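- Hardware and Scalability: Gemini is trained and served on Google's Tensor Processing Units (TPUs). Google reports training Gemini 1.0 on TPU v4 and v5e, and introduced Cloud TPU v5p alongside it to accelerate future Gemini development. For scalable cloud deployment, TPU workloads can be orchestrated with Kubernetes.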

- Advanced Attention and Activation Functions: The model uses multi-head self-attention to capture contextual relationships between each token and every preceding token, regardless of how far apart they are. The feed-forward networks use GeGLU activations (a gated linear unit variant with a GELU gate) instead of standard ReLU, improving the model’s ability to learn intricate patterns.

- Comparison to Other Models: Gemini pushes the boundaries of multimodal AI by natively integrating multiple modalities and supporting complex reasoning, in contrast to models such as LLaVA, which connect separately pretrained unimodal components (a vision encoder and a language model) after the fact. It also competes with Claude on reasoning accuracy and with ChatGPT on conversational fluency.
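
The decoder block described in the list above (causal multi-head self-attention followed by a GeGLU feed-forward network) can be sketched in plain Python. This is a toy illustration under stated assumptions, not Gemini's implementation: the pre-norm residual layout, the RMSNorm layer, the tanh approximation of GELU, and the helper names (`causal_mha`, `geglu_ffn`, `decoder_block`, `init_params`) are hypothetical choices made here, and positional encodings are omitted entirely. Gemini's actual layer sizes, normalization, and attention variant are not given in this article.

```python
# Toy decoder block sketch; layer choices below are assumptions, not Gemini's published design.
import math
import random

Matrix = list[list[float]]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Multiply an (n x k) matrix by a (k x m) matrix."""
    return [[sum(a_ik * b[k][j] for k, a_ik in enumerate(row)) for j in range(len(b[0]))]
            for row in a]


def softmax(xs: list[float]) -> list[float]:
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def gelu(x: float) -> float:
    """Tanh approximation of GELU."""
    return 0.5 * x * (1.0 + math.tanh(math.sqrt(2.0 / math.pi) * (x + 0.044715 * x ** 3)))


def rms_norm(x: Matrix, eps: float = 1e-6) -> Matrix:
    """Per-token RMS normalization (an assumed choice of norm layer)."""
    out = []
    for row in x:
        scale = 1.0 / math.sqrt(sum(v * v for v in row) / len(row) + eps)
        out.append([v * scale for v in row])
    return out


def causal_mha(x: Matrix, p: dict, n_heads: int) -> Matrix:
    """Multi-head self-attention in which position i attends only to positions 0..i."""
    q, k, v = matmul(x, p["wq"]), matmul(x, p["wk"]), matmul(x, p["wv"])
    d_model = len(x[0])
    d_head = d_model // n_heads
    concat = [[0.0] * d_model for _ in x]
    for h in range(n_heads):
        cols = range(h * d_head, (h + 1) * d_head)  # this head's slice of the features
        for i in range(len(x)):
            # Causal mask: score only keys j <= i, so future tokens are never seen.
            scores = [sum(q[i][c] * k[j][c] for c in cols) / math.sqrt(d_head)
                      for j in range(i + 1)]
            weights = softmax(scores)
            for c in cols:
                concat[i][c] = sum(w * v[j][c] for j, w in enumerate(weights))
    return matmul(concat, p["wo"])  # mix the concatenated heads


def geglu_ffn(x: Matrix, p: dict) -> Matrix:
    """GeGLU feed-forward: (GELU(x W) * (x V)) W2, with * applied elementwise."""
    gate, linear = matmul(x, p["w"]), matmul(x, p["v"])
    hidden = [[gelu(g) * lin for g, lin in zip(g_row, l_row)]
              for g_row, l_row in zip(gate, linear)]
    return matmul(hidden, p["w2"])


def add(a: Matrix, b: Matrix) -> Matrix:
    return [[x + y for x, y in zip(ra, rb)] for ra, rb in zip(a, b)]


def decoder_block(x: Matrix, p: dict, n_heads: int) -> Matrix:
    """Pre-norm residual block: causal attention sublayer, then GeGLU sublayer."""
    x = add(x, causal_mha(rms_norm(x), p, n_heads))
    return add(x, geglu_ffn(rms_norm(x), p))


def random_matrix(rng: random.Random, rows: int, cols: int, scale: float = 0.1) -> Matrix:
    return [[rng.gauss(0.0, scale) for _ in range(cols)] for _ in range(rows)]


def init_params(d_model: int, d_ff: int, seed: int = 0) -> dict:
    """Hypothetical toy weights; real model sizes and initialization are not public."""
    rng = random.Random(seed)
    p = {name: random_matrix(rng, d_model, d_model) for name in ("wq", "wk", "wv", "wo")}
    p["w"] = random_matrix(rng, d_model, d_ff)
    p["v"] = random_matrix(rng, d_model, d_ff)
    p["w2"] = random_matrix(rng, d_ff, d_model)
    return p


if __name__ == "__main__":
    params = init_params(d_model=8, d_ff=16)
    tokens = random_matrix(random.Random(1), 4, 8, scale=1.0)  # 4 token embeddings
    out = decoder_block(tokens, params, n_heads=2)
    edited = [row[:] for row in tokens]
    edited[-1] = [0.0] * 8  # change only the last token
    print(out[:3] == decoder_block(edited, params, n_heads=2)[:3])  # True: causal mask
```

Because each position only scores keys at or before itself, editing the last token leaves the outputs for all earlier positions unchanged. That property is what allows a decoder to be trained on next-token prediction for every position of a sequence at once.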
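
To make the idea of interleaved multimodal input from the list above more concrete, here is a minimal sketch of how text tokens and image content might be placed into a single embedding sequence for a decoder. The specifics are assumptions, not Gemini's documented pipeline: text uses a hypothetical embedding table, and images are cut into square patches and linearly projected (a ViT-style scheme) to the same width as the text embeddings. Names such as `interleave`, `image_to_patches`, and `project` are illustrative only.

```python
# Interleaving sketch; the embedding table and patch projection are hypothetical stand-ins.
import random

Vector = list[float]


def project(vectors: list[Vector], weight: list[Vector]) -> list[Vector]:
    """Linear map from len(weight) input features to len(weight[0]) outputs."""
    return [[sum(x * weight[k][j] for k, x in enumerate(vec)) for j in range(len(weight[0]))]
            for vec in vectors]


def image_to_patches(image: list[Vector], patch: int) -> list[Vector]:
    """Split an H x W grayscale image into flattened patch x patch tiles, row-major."""
    h, w = len(image), len(image[0])
    if h % patch or w % patch:
        raise ValueError("image height and width must be divisible by the patch size")
    return [[image[r + i][c + j] for i in range(patch) for j in range(patch)]
            for r in range(0, h, patch) for c in range(0, w, patch)]


def interleave(segments, text_table, patch_proj, patch):
    """Turn an ordered list of ("text", ids) / ("image", pixels) segments into one sequence.

    Returns the embedding sequence and a parallel list recording each position's modality.
    """
    sequence, modality = [], []
    for kind, payload in segments:
        if kind == "text":
            vecs = [text_table[t][:] for t in payload]  # hypothetical embedding lookup
        elif kind == "image":
            vecs = project(image_to_patches(payload, patch), patch_proj)  # assumed ViT-style
        else:
            raise ValueError(f"unsupported modality: {kind}")
        sequence.extend(vecs)
        modality.extend([kind] * len(vecs))
    return sequence, modality


if __name__ == "__main__":
    rng = random.Random(0)
    d_model, patch, vocab = 8, 2, 10
    text_table = [[rng.gauss(0.0, 1.0) for _ in range(d_model)] for _ in range(vocab)]
    patch_proj = [[rng.gauss(0.0, 0.1) for _ in range(d_model)] for _ in range(patch * patch)]
    image = [[rng.random() for _ in range(4)] for _ in range(4)]  # 4x4 -> four 2x2 patches
    seq, kinds = interleave([("text", [1, 2, 3]), ("image", image), ("text", [4])],
                            text_table, patch_proj, patch)
    print(len(seq), kinds)
    # 8 ['text', 'text', 'text', 'image', 'image', 'image', 'image', 'text']
```

In this sketch, a decoder would then process the combined sequence just as it would a text-only one; the modality list only records where each segment landed.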

In summary, Gemini is a decoder-only transformer designed from the ground up for native multimodal learning. It combines advanced attention mechanisms, efficient feed-forward layers with GeGLU activations, and chain-of-thought reasoning to handle diverse and complex multimodal tasks at scale.
